Complaints About Safety and Lack of Consent

According to a recent article from Consumer Reports, their car safety experts and some academic engineering experts are concerned that Tesla’s FSD Beta Software used on public roads lacks safeguards, and that it is putting others at unnecessary risk as Tesla continues to use vehicle owners as beta testers for its new features.

Beta testers play an important role for Tesla as it tries to build not just interest in its vehicles, such as the recently released Model S Plaid, but also acceptance of the idea that EVs with full self-driving capabilities are the next big leap in automotive tech…and safety.

Tesla owners and enthusiasts have posted numerous videos showing how well Tesla FSD operates in real-life traffic, and many are remarkably impressive. However, there has also been some negative publicity involving both real and questionable accidents, as well as at least one Tesla owner arrested for breaking California law by riding in the back seat rather than remaining in control of his Tesla while under Autopilot. Not a few quirks have also been found with Autopilot, including a headlight high beam issue.

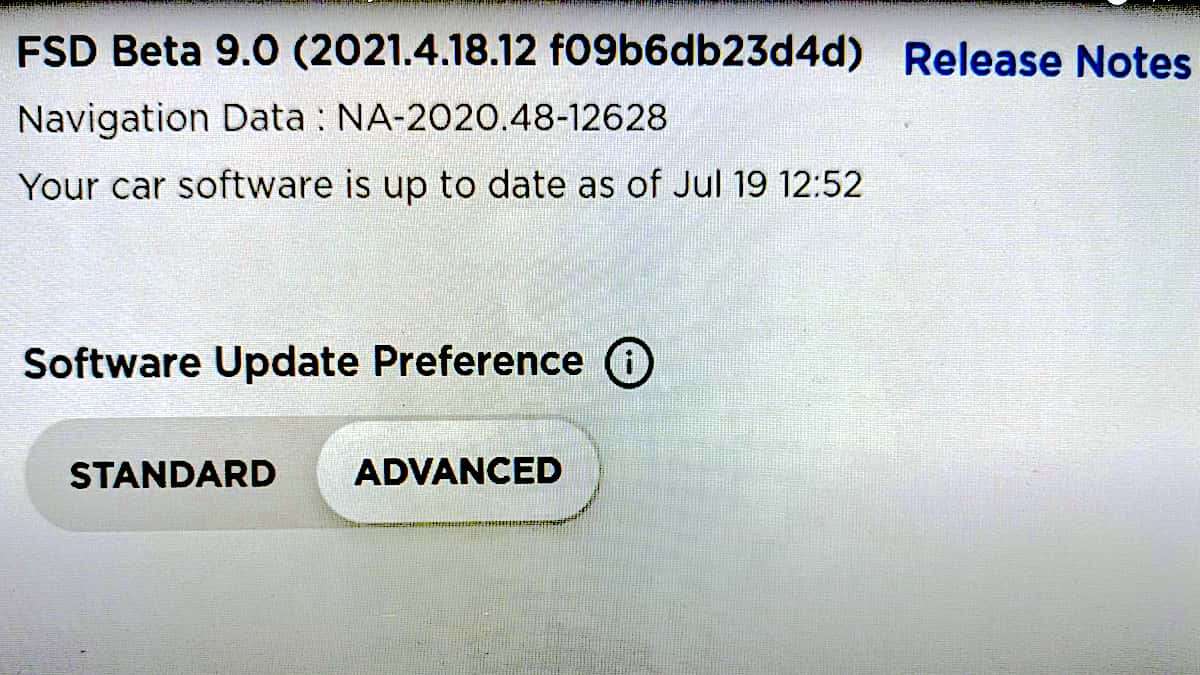

Recently, the latest update to Tesla’s FSD suite, known as “FSD Beta 9,” was released, and a whirlwind of new videos showcasing Beta 9’s magic has been uploaded to YouTube. However, according to Consumer Reports, its experts have been paying close attention to those videos and are finding faults that include:

• Missing turns

• Scraping against bushes

• Heading toward parked cars

• Taking the wrong lane after a turn

• “Meandering” from side to side like a drunk driver

In fact, Consumer Reports writes that, “Selika Josiah Talbott, a professor at the American University School of Public Affairs in Washington, D.C., who studies autonomous vehicles, said that the FSD beta 9-equipped Teslas in videos she has seen act ‘almost like a drunk driver,’ struggling to stay between lane lines. ‘It’s meandering to the left; it’s meandering to the right,’ she says. ‘While its right-hand turns appear to be fairly solid, the left-hand turns are almost wild.’”

While the popularity of Tesla’s FSD is fueled by its fanbase, safety experts at CR point out that the fans’ faith in FSD Beta 9 is not warranted and that their fervor is driving insufficiently proven tech to be released too soon.

“Videos of FSD beta 9 in action don’t show a system that makes driving safer or even less stressful,” says Jake Fisher, senior director of CR’s Auto Test Center. “Consumers are simply paying to be test engineers for developing technology without adequate safety protection.”

Consumer Reports safety experts are not alone in this view and point to other experts who state that much of the public is unaware that they’re part of an ongoing experiment that they did not consent to.

“While drivers may have some awareness of the increased risk that they are assuming, other road users—drivers, pedestrians, cyclists, etc.—are unaware that they are in the presence of a test vehicle and have not consented to take on this risk,” stated Bryan Reimer, a professor at MIT and founder of the Advanced Vehicle Technology (AVT) consortium, a group that researches vehicle automation.

CR points out that, in contrast to other developers of self-driving car technology such as Argo AI, Cruise, and Waymo, Tesla uses its fans as test drivers on public streets, whereas those companies limit their software testing to private courses and/or use trained safety drivers as monitors. Moreover, safety experts point out that, at the very least, Tesla needs to ensure that its drivers are actually paying attention to the road at all times.

“Tesla just asking people to pay attention isn’t enough—the system needs to make sure people are engaged when the system is operational,” says Fisher, who recommends that Tesla use in-car driver monitoring systems to ensure that drivers have their eyes on the road. “We already know that testing developing self-driving systems without adequate driver support can—and will—end in fatalities.”

As an example of such a fatality, CR writes:

An Uber self-driving test vehicle struck and killed 49-year-old Elaine Herzberg in 2018 as she crossed a street in the Phoenix area. An investigation found that the safety driver in the test vehicle was distracted at the time and did not override the system to stop. A federal investigation partially blamed insufficient regulation and inadequate safety policies at Uber for the collision. After that tragedy, many companies enacted stricter internal safety policies for testing self-driving systems, such as monitoring drivers to ensure that they aren’t distracted and relying more on simulations and closed test tracks.

FSD Is Not Here Yet

To most, seeing those YouTube videos is believing. However, to more critical and trained eyes, FSD is not there yet, as Consumer Reports learned from other experts:

“It’s hard to know just by watching these videos what the exact problem is, but just watching the videos it’s clear (that) it’s having an object detection and/or classification problem,” says Missy Cummings, an automation expert who is director of the Humans and Autonomy Laboratory at Duke University in Durham, N.C. In other words, she says, the car is struggling to determine what the objects it perceives are or what to do with that information, or both.

According to Cummings, it may be possible for Tesla to eventually build a self-driving car, but the amount of testing the automaker has done so far would be insufficient to build that capacity within the existing software. “I’m not going to rule out that at some point in the future that’s a possible event. But are they there now? No. Are they even close? No.”

An Attitude Fix Toward a Safer FSD Approach

The problem may lie in Tesla’s beta-release approach, which may be fine for Apple but is the wrong one for Tesla when public safety is at stake. In CR’s interview, Cummings says that the Silicon Valley ethos is wrong for Tesla.

“It’s a very Silicon Valley ethos to get your software 80 percent of the way there and then release it and then let your users figure out the problems…And maybe that’s okay for your cell phone, but it’s not okay for a safety critical system,” stated Cummings.

Another point made is that Tesla has not made enough effort to educate its fans and might be misleading them with marketing terms such as “Full Self-Driving,” which could give many owners excited by the new tech the false impression that the vehicles can drive without human intervention.

While other developers of automated driving technology would not comment directly on how they feel about Tesla’s approach to testing its FSD product, they all appear to share a common practice of taking their time, ensuring that what works works, and doing so under controlled conditions without putting the public at risk. More specifically, they use trained safety drivers and closed test courses to validate vehicle software before testing on public roads.

Can or will Tesla change its attitude and approach? The experts did not sound hopeful about this happening and indicated that it is another case of the law lagging behind the technology. It will likely take regulation to enforce change, but will it be too little, too late?

“Car technology is advancing really quickly, and automation has a lot of potential, but policymakers need to step up to get strong, sensible safety rules in place,” says William Wallace, manager of safety policy at CR. “Otherwise, some companies will just treat our public roads as if they were private proving grounds, with little holding them accountable for safety.”

For more about how other experts feel about how Beta 9 handles, check out this article and video featuring automotive expert Sandy Munro. In addition, here is a Tesla Vision No Radar Road Test Challenge that recently showcased how well Tesla FSD is doing without radar.

Timothy Boyer is a Torque News Tesla and EV reporter based in Cincinnati. Experienced with early car restorations, he regularly restores older vehicles with engine modifications for improved performance. Follow Tim on Twitter at @TimBoyerWrites for daily Tesla and electric vehicle news.

Comments

So, Musk goes from Level 2 Autopileup to Level 1 Full Self Destruction, saves money on radar, and charges $10,000 for the privilege of a future promise that will more likely end up costing you your life than achieving Level 3 performance. Level 3 will soon be available in compact lidar systems costing well under $1,000.

LOL. CR are a bunch of shills. Let's think about this. If they are concerned about a few hundred Teslas using FSD Beta and Tesla not doing 'enough' to ensure those drivers pay attention, how do they feel about the other 250 million cars on US roads WITHOUT ANY ADAS? Without ADAS, the car is way more likely to be in an accident if the driver gets distracted or does not pay attention. Shouldn't CR's concern be with the 250+ million vehicles on the road and the manufacturers not doing anything about those drivers paying attention when they have no assistance and thus an INCREASED chance of accidents if they don't pay attention?

I think CR brings up an important point. Ford, GM, Toyota, Honda, VW, etc. ALL should immediately start putting massive effort into making sure the owners of the hundreds of millions of their cars all over the world pay attention 100% of the time without any distractions, because those cars have NO ADAS and are thus much more likely to cause an accident when the driver is distracted.

Actually, the statistics bear this out. About 3500 people die every day on roads globally because legacy manufacturers have neglected their duty in ensuring drivers pay attention 100% of the time. It is shocking that CR and other safety advocates have been so totally absent and quiet on this.

Can you please reach out to CR and ask them about this? Think about it. Riding a tricycle is much more stable and a parent would be less concerned about his child falling off it. Riding a bicycle has much less assistance and thus a parent will have more concern. Why is CR showing concern over the tricycle and not the bicycle???

Whenever I read these skewed perspectives I think about the regular, human drivers that I encounter on the roads every day. The worst of them drive irrationally and impatiently, cutting people off. So many others are not paying attention, whether texting or otherwise distracted, or are tired or under the influence of drugs or alcohol. Should we all be warned about those human drivers who regularly pose a risk to our lives on the road? The "other self driving" systems are comparatively limited. Sure, they watch the human drivers more, but they have a very narrow scope of use, being geomapped, or otherwise limited to only point-to-point travel in a small area, or just on certain freeways. It can't be ignored that Tesla has taken on the greater challenge of having driving assist anywhere, which is far from simple. But they are quickly evolving and improving FSD daily. The CEO of Consumer Reports just came from being on the board of directors of Ford Motor Company, and you can see her bias against Tesla shown in a rash of attacking articles like these, masked as impartial reviews.

Thank you CR for the Tesla FSD reality check.

Unfortunately, the Internet fanboys will have their say, loudly, and deny all.

Prepare for the legal, financial, and cultural chaos to come.