Tesla Vision and the Blind Corner Problem

Earlier we posted a recent video demonstrating how well Tesla Autopilot operates under Tesla Vision, without radar, in common commute conditions such as driving home from work on a busy interstate and back roads. Aside from some screen glitches involving semis sharing the road, Tesla Vision worked admirably and required no driver intervention.

As with any work in progress, however, the camera system in Tesla vehicles has had some bugs, such as with the high-beam function controlling the headlights and with detection and avoidance when a pulled-over vehicle partially overlaps the roadway. To adjust for potential and current problems, multiple common (and some unusual) scenarios are used for tweaking Tesla Level 5 FSD.

In fact, according to a recent article about Tesla’s neural net development for FSD, the system reportedly relies on more than 15 million miles' worth of data to identify and create scenario simulations for testing the latest versions of FSD. However, while awaiting the repeatedly promised Beta 9 version of FSD, users have questions about real-life scenarios they face with the current version of Autopilot and FSD, particularly how Tesla handles blind corners.

A Blind Corners Fix…or Two

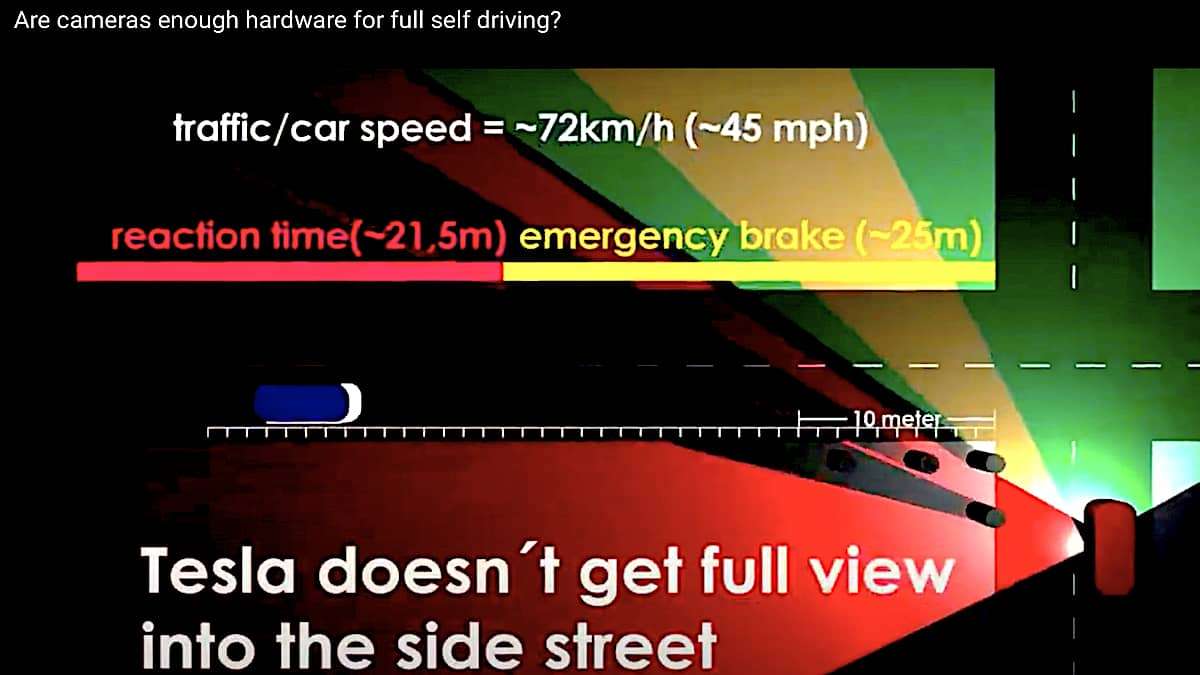

Today, a video was posted on a new YouTube channel that addresses the blind corner problem and asks whether the eight cameras, as oriented on Tesla vehicles, are enough hardware to qualify as true full self-driving that is safer than relying on our senses.

Here’s the video that explains the problem and a surprisingly simple solution that could help FSD become markedly safer.

Are Cameras Enough Hardware for Full Self Driving?

For more about Tesla Vision as it continues to develop, be sure to watch for additional articles from Torque News in the near future such as this one on Tesla Battery tech.

Timothy Boyer is the Torque News Tesla and EV reporter based in Cincinnati. Experienced with early car restorations, he regularly restores older vehicles with engine modifications for improved performance. Follow Tim on Twitter at @TimBoyerWrites for daily Tesla and electric vehicle news.
